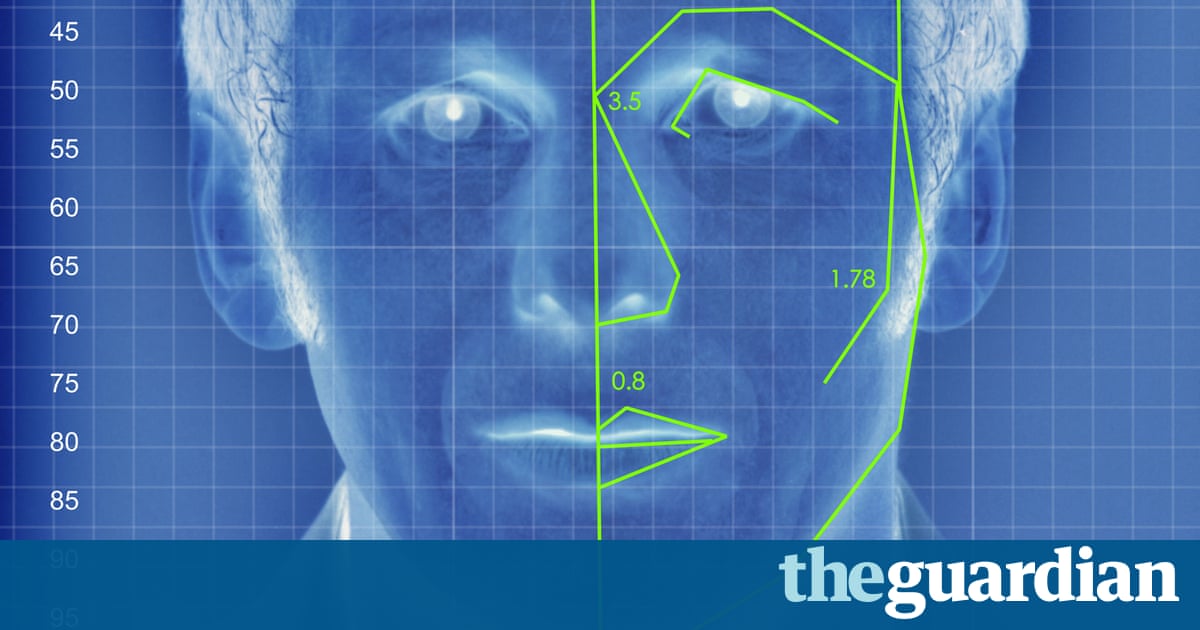

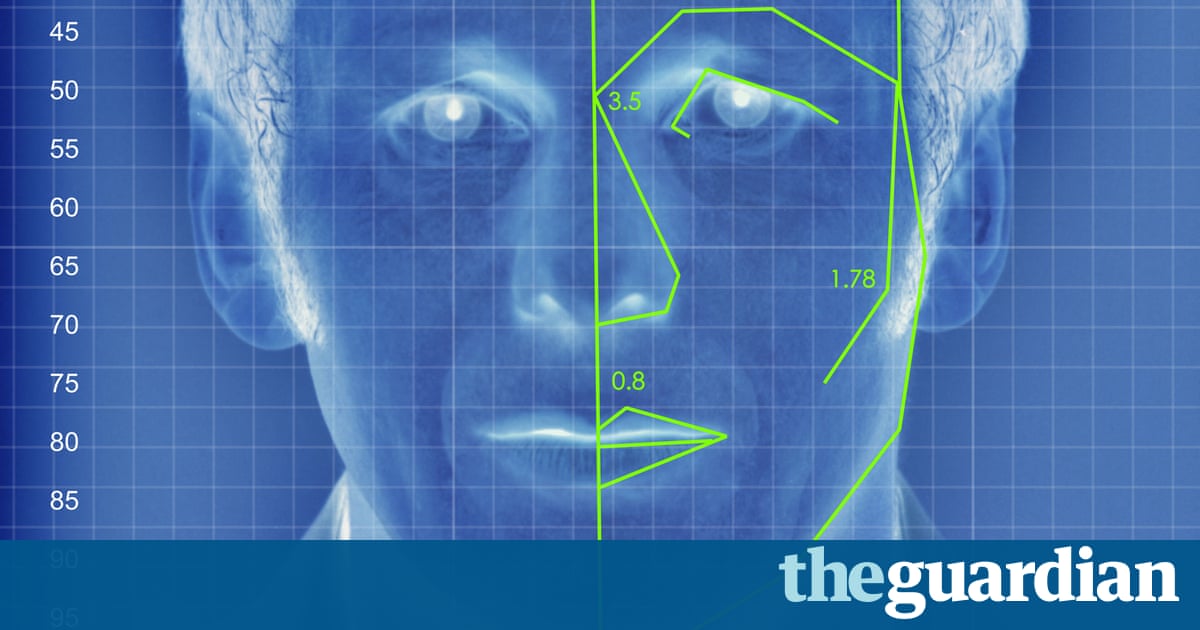

An algorithm deduced the sexuality of individuals on a dating website with up to 91% accuracy, raising tricky ethical questions

Artificial intelligence can accurately guess whether people are gay or straight based on photos of their faces, according to new research that indicates machines can have considerably better “gaydar” than humans.

The research from Stanford University, which found that a computer algorithm could correctly distinguish between gay and straight men 81% of the time, and between gay and straight women 74% of the time, has raised questions about the biological origins of sexual orientation, the ethics of facial-detection technology, and the potential for this sort of software to violate people’s privacy or be abused for anti-LGBT purposes.

The machine intelligence tested in the research, which was published in the Journal of Personality and Social Psychology and first reported in the Economist, was based on a sample of more than 35,000 facial images that people publicly posted to a US dating site. The researchers, Michal Kosinski and Yilun Wang, extracted features from the images using “deep neural networks”, meaning a complex mathematical system that learns to analyze visuals based on a large dataset.

The research found that gay men and women tended to have “gender-atypical” features, expressions and “grooming styles”, essentially meaning gay men appeared more feminine and vice versa. The data also identified certain trends, including that gay men had narrower jaws, longer noses and larger foreheads than straight men, and that gay women had larger jaws and smaller foreheads compared to straight women.

Human judges performed much worse than the algorithm, accurately identifying orientation only 61% of the time for men and 54% for women. When the software reviewed five images per person, it was even more successful: 91% of the time with men and 83% with women. Broadly, that means “faces contain much more information about sexual orientation than can be perceived and interpreted by the human brain”, the authors wrote.

The paper suggested that the findings provide “strong support” for the theory that sexual orientation stems from exposure to certain hormones before birth, meaning people are born gay and being queer is not a choice. The machine’s lower success rate for women could also support the notion that female sexual orientation is more fluid.

While the findings have clear limitations when it comes to gender and sexuality (people of colour were not included in the study, and there was no consideration of transgender or bisexual people), the implications for artificial intelligence (AI) are vast and alarming. With billions of facial images of people stored on social media sites and in government databases, the researchers suggested that public data could be used to detect people’s sexual orientation without their consent.

It’s easy to imagine spouses using the technology on partners they suspect are closeted, or teenagers using the algorithm on themselves or their peers. More frighteningly, authorities that continue to prosecute LGBT people could use the technology to out and target populations. That means building this sort of software and publicizing it is itself controversial, given concerns that it could encourage harmful applications.

But the authors argued that the technology already exists, and that its capabilities are important to expose so that governments and companies can consider privacy risks and the need for safeguards and regulations.

“It’s certainly unsettling. Like any new tool, if it gets into the wrong hands, it can be used for ill purposes,” said Nick Rule, an associate professor of psychology at the University of Toronto, who has published research on the science of gaydar. “If you can start profiling people based on their appearance, then identifying them and doing horrible things to them, that’s really bad.”

Rule argued it was still important to develop and test this technology: “What the authors have done here is to make a very bold statement about how powerful this can be. Now we know that we need protections.”

Kosinski was not immediately available for comment, but after publication of this article on Friday, he spoke to the Guardian about the ethics of the study and its implications for LGBT rights. The professor is known for his work with Cambridge University on psychometric profiling, including using Facebook data to draw conclusions about personality. Donald Trump’s campaign and Brexit supporters deployed similar tools to target voters, raising concerns about the expanding use of personal data in elections.

In the Stanford study, the authors also noted that artificial intelligence could be used to explore links between facial features and a range of other phenomena, such as political views, psychological conditions or personality.

This sort of research further raises concerns about the potential for scenarios like the science-fiction movie Minority Report, in which people can be arrested based solely on the prediction that they will commit a crime.

“AI can tell you anything about anyone with enough data,” said Brian Brackeen, CEO of Kairos, a face recognition company. “The question is as a society, do we want to know?”

Brackeen, who said the Stanford data on sexual orientation was “startlingly correct”, said there needs to be an increased focus on privacy and on tools to prevent the misuse of machine learning as it becomes more widespread and advanced.

Rule speculated about AI being used to actively discriminate against people based on a machine’s interpretation of their faces: “We should all be collectively concerned.”

Read more: http://www.theguardian.com/us